Everyone wants AI in their product now. The use cases are real and the technology has caught up. But the first technical decision many teams get wrong is also the most expensive one: should we fine-tune a model, or build a RAG system?

We've built both. They are not interchangeable. Choosing wrong doesn't just waste money - it can waste months. Here's how to choose right the first time.

What they actually are

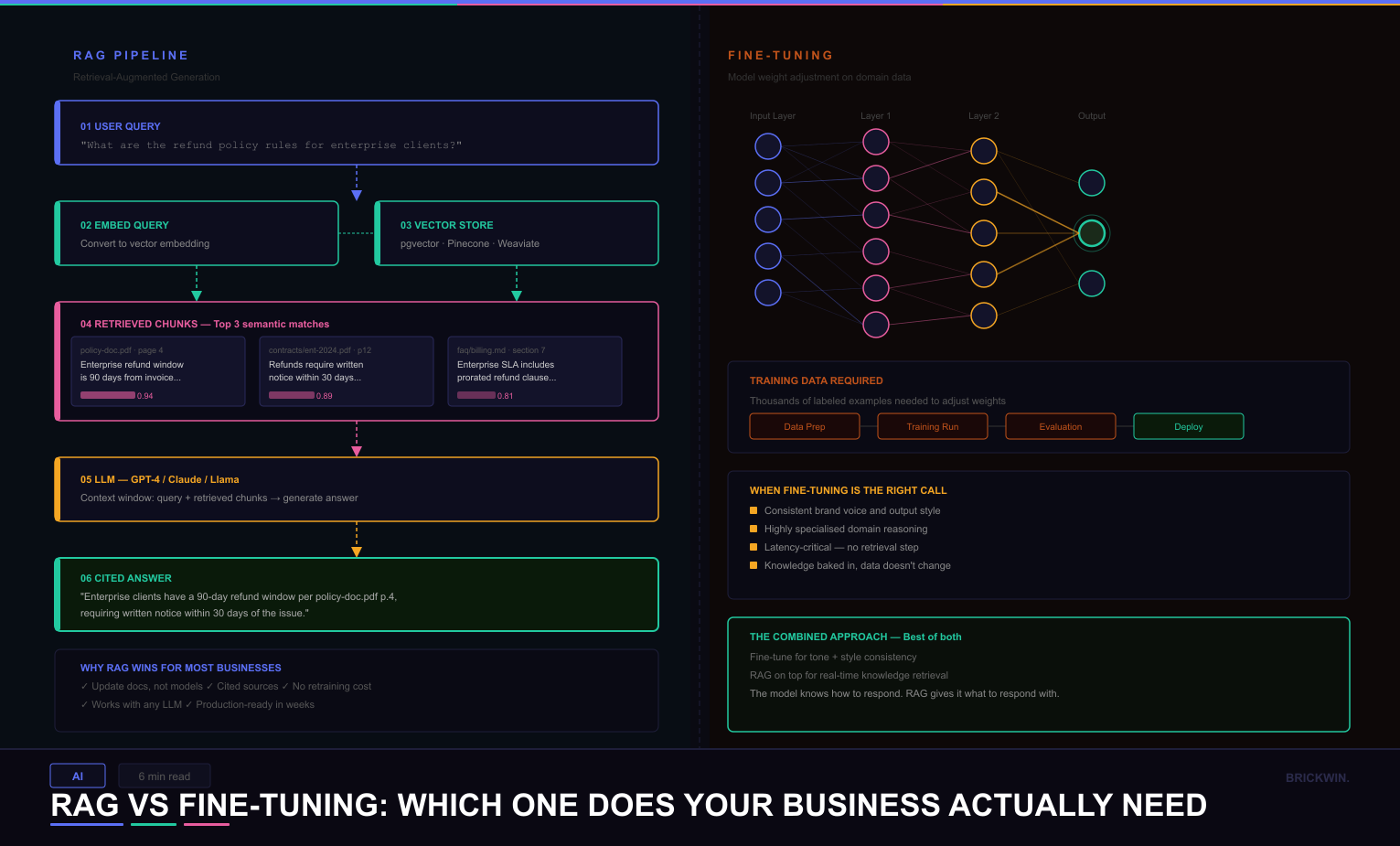

Fine-tuning means taking an existing model - GPT-4o, Llama, Mistral - and training it further on your own examples. The model learns your tone, your terminology, your patterns. It becomes a version of itself with those behaviours built into its weights rather than supplied in the prompt.

RAG (Retrieval-Augmented Generation) means keeping the base model untouched and giving it a retrieval layer. When a user asks a question, the system searches your documents, pulls the most relevant chunks, and feeds them to the model as context alongside the question. The model answers using what it just retrieved.
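The retrieve-then-generate loop can be sketched in a few lines. This is a minimal sketch under loud assumptions: word-overlap cosine similarity stands in for a real embedding model and vector database, the function names (`retrieve`, `build_prompt`) are hypothetical rather than from any library, and the final call to the language model is left out because it depends on your provider.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercased word tokens; a crude stand-in for an embedding model.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two bag-of-words vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    # Rank every chunk by similarity to the query and keep the top k.
    q = Counter(tokenize(query))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    # The retrieved chunks become part of the prompt; the model itself is unchanged.
    joined = "\n\n".join(context)
    return (
        "Answer using only the context below. If the answer is not there, say so.\n\n"
        f"Context:\n{joined}\n\nQuestion: {query}"
    )


chunks = [
    "Refunds are issued within 14 days of a return being received.",
    "Our office is closed on public holidays.",
    "Premium plans include priority email support.",
]
question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, chunks, k=1))
print(prompt)  # this string is what would be sent to the model
```

In production the similarity search runs over precomputed embeddings, but the shape stays the same: search, select, then put the results into the prompt.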

Same goal. Completely different architecture. Completely different tradeoffs.

When RAG is the right answer

RAG works well for most business use cases involving documents, knowledge bases, or data that changes regularly. Product documentation, legal contracts, support knowledge bases, internal policies - if this content gets updated, RAG handles it cleanly.

Fine-tuning bakes knowledge into the model at a point in time. When your documentation changes, you retrain and redeploy. When policies update, you retrain again. With RAG, you update and re-index the affected documents, and the model has access to the new information on the next query. No retraining, no new model version to evaluate and ship.
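To make the "update the store, not the model" point concrete, here is a toy sketch. `DocumentStore` is a hypothetical in-memory class, and word overlap stands in for vector search; in a real system, `upsert` is also where the changed document would be re-chunked and re-embedded.

```python
import re


def _words(text: str) -> set[str]:
    # Lowercased word set; a crude stand-in for embedding similarity.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class DocumentStore:
    """Toy in-memory store (hypothetical); real systems use a vector index."""

    def __init__(self) -> None:
        self.docs: dict[str, str] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        # Updating knowledge means replacing a document, not retraining a model.
        self.docs[doc_id] = text

    def search(self, query: str, k: int = 1) -> list[str]:
        # Rank documents by how many query words they share.
        terms = _words(query)
        ranked = sorted(
            self.docs.values(),
            key=lambda text: len(terms & _words(text)),
            reverse=True,
        )
        return ranked[:k]


store = DocumentStore()
store.upsert("remote-policy", "Remote work is allowed two days per week.")
store.upsert("expenses", "Expenses must be filed within 30 days.")

query = "How many remote work days?"
before = store.search(query)
store.upsert("remote-policy", "Remote work is allowed three days per week.")
after = store.search(query)
print(before)  # ['Remote work is allowed two days per week.']
print(after)   # ['Remote work is allowed three days per week.']
```

The policy change takes effect on the very next search, and nothing about the model was touched.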

RAG also makes answers easier to verify because they're grounded in retrieved source documents - you can show exactly which passages an answer was drawn from. Grounding reduces hallucination but doesn't eliminate it, which is why that traceability matters. In legal, compliance, and finance contexts, it's often a requirement, not a nice-to-have.
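One common way to get citations is to number the retrieved passages in the prompt, ask the model to cite them as `[n]`, and then map those markers back to source documents. A minimal sketch follows. The `Chunk` type, the helper names, the source paths, and the model answer are all hypothetical, made up for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str  # where the passage came from, e.g. a file path plus page
    text: str


def build_cited_prompt(question: str, chunks: list[Chunk]) -> tuple[str, dict[int, str]]:
    """Number each retrieved passage so the model can cite it as [n]."""
    sources: dict[int, str] = {}
    passages = []
    for i, chunk in enumerate(chunks, start=1):
        sources[i] = chunk.source
        passages.append(f"[{i}] {chunk.text}")
    prompt = (
        "Answer using only the numbered passages and cite them inline as [n].\n\n"
        + "\n".join(passages)
        + f"\n\nQuestion: {question}"
    )
    return prompt, sources


def cited_sources(answer: str, sources: dict[int, str]) -> list[str]:
    """Map [n] markers in the model's answer back to source documents."""
    ids = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    # Drop numbers that don't match any retrieved passage (invented citations).
    return [sources[i] for i in ids if i in sources]


retrieved = [
    Chunk("contracts/msa.pdf#page=4", "Either party may terminate with 30 days' written notice."),
    Chunk("policies/termination.md", "Termination notices are reviewed by the legal team."),
]
prompt, sources = build_cited_prompt("What notice period applies to termination?", retrieved)

# A made-up model answer; [7] does not exist, so it is filtered out.
answer = "Either party may terminate with 30 days' written notice [1][7]."
print(cited_sources(answer, sources))  # ['contracts/msa.pdf#page=4']
```

Checking citations against the passages that were actually retrieved is what turns "grounded" into something a reviewer can audit.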

When fine-tuning is the right answer

Fine-tuning earns its complexity in specific cases. If you need the model to always write in a particular brand voice - specific sentence structure, specific vocabulary - fine-tuning is the most reliable way to embed that deeply. Prompt engineering gets you most of the way there. Fine-tuning handles the rest.
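For brand voice, the training data is a set of example conversations where the assistant turn is written exactly the way you want the model to write. The sketch below builds chat-format JSONL, one JSON object per line with a `messages` list, which is the shape OpenAI's fine-tuning API accepts. Other providers may differ, so check their docs. The brand, system prompt, and replies are hypothetical, and a real dataset needs far more than two examples.

```python
import json

# Hypothetical brand and examples, for illustration only.
SYSTEM_PROMPT = "You are Acme's support assistant."

EXAMPLES = [
    ("Can I change my plan?",
     "Absolutely. Head to Settings, pick a new plan, and you're done. No fees, no fuss."),
    ("Do you have a mobile app?",
     "We do. Search for Acme in your app store and sign in with your usual details."),
]


def to_record(user_msg: str, assistant_msg: str) -> dict:
    # One training example in chat format: the assistant turn is the
    # response whose tone and structure the model should learn to imitate.
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_msg},
            {"role": "assistant", "content": assistant_msg},
        ]
    }


def to_jsonl(pairs: list[tuple[str, str]]) -> str:
    # JSONL: one JSON object per line, ready to write to a file and upload.
    lines = (json.dumps(to_record(u, a), ensure_ascii=False) for u, a in pairs)
    return "\n".join(lines) + "\n"


jsonl = to_jsonl(EXAMPLES)
print(jsonl)
```

Note that nothing here teaches the model facts about Acme's products. The examples teach *how* to answer, which is the job fine-tuning does reliably.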

It also makes sense for highly specialised domain reasoning where the base model's general knowledge isn't sufficient, and for latency-critical applications where RAG's retrieval step adds too much time.

The most common mistake

Fine-tuning is often reached for first because it sounds more thorough, more custom. But for most business problems, it's solving the wrong thing - expensively. The knowledge these applications need lives in documents, and fine-tuning is an unreliable way to teach a model facts. RAG retrieves those facts directly from the source, with a fraction of the setup time.

Fine-tuning is not a premium upgrade from RAG. It's a different tool for a different problem. Most business problems are RAG problems.

Can you use both?

Yes - and for complex systems, you should. Fine-tune for tone, domain vocabulary, and output format. Build RAG on top for real-time knowledge retrieval. The fine-tuned model knows how to respond. The RAG layer gives it what to respond with.
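Wiring the two together is mostly plumbing: the retrieval layer builds the context, and the prompt goes to the fine-tuned model instead of the base model. The sketch below assumes you wrap your vector store and model client as plain callables. The model id, the fake retriever and generator, and the "refuse when nothing is retrieved" policy are all hypothetical choices made for illustration.

```python
from typing import Callable

# (question) -> retrieved passages
Retriever = Callable[[str], list[str]]
# (model_id, prompt) -> completion; wrap your provider's client in this shape
Generator = Callable[[str, str], str]

FINE_TUNED_MODEL = "acme-support-voice-v1"  # hypothetical fine-tuned model id


def answer(question: str, retrieve: Retriever, generate: Generator,
           model: str = FINE_TUNED_MODEL) -> str:
    passages = retrieve(question)  # RAG layer: what to respond with
    if not passages:
        # Assumed policy: don't let the style model answer without facts.
        return "I couldn't find that in our documentation."
    context = "\n".join(f"- {p}" for p in passages)
    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    return generate(model, prompt)  # fine-tuned model: how to respond


# Stand-ins so the sketch runs without a vector store or model API.
def fake_retrieve(question: str) -> list[str]:
    return ["Refunds are issued within 14 days."] if "refund" in question.lower() else []


calls: list[tuple[str, str]] = []


def fake_generate(model: str, prompt: str) -> str:
    calls.append((model, prompt))
    return "Good news: refunds land within 14 days."


print(answer("How long do refunds take?", fake_retrieve, fake_generate))
print(answer("Do you sell gift cards?", fake_retrieve, fake_generate))
```

Keeping retrieval and generation behind separate callables also means the RAG layer can be built and tested against a base model first, with the fine-tuned model swapped in later.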

The question isn't which technology is better. It's which problem you're actually solving. If your data changes, use RAG. If your style needs to be consistent, fine-tune. If you need both, combine them - but build the RAG layer first.